cricketbird

Registered User.

- Local time

- Today, 03:39

- Joined

- Jun 17, 2013

- Messages

- 124

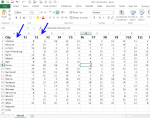

I would like to use an append query to import data in the following format:

| Sample Name | Carbon | Nitrogen | Oxygen | Hydrogen |
|---|---|---|---|---|
| Sample 123 | 10 | 20 | 30 | 40 |
| Sample 234 | 15 | 54 | 25 | 60 |

to a table in my database with the following columns:

- RecordID (AutoNumber primary key)
- SampleID (foreign key to table Samples)
- AnalysisID (foreign key to table Elements)
- AnalysisValue

I usually use the query builder, but it doesn't seem able to "flatten" (unpivot) the data this way, turning one row per sample into one row per element value.

Can SQL handle this? Or can anyone suggest an approach other than queries?
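One common way SQL handles this is a UNION ALL "unpivot": one SELECT per element column, each tagging its values with that element's AnalysisID, stacked into a single result. The sketch below demonstrates the idea with Python's built-in sqlite3 module, with SQLite standing in for Access. The table names ImportWide and Results, the ANALYSIS_IDS mapping, and the rule that SampleID is the number inside "Sample 123" are assumptions for illustration. A real import would more likely look the ID up by joining to the Samples table.

```python
import sqlite3

# Hypothetical names: ImportWide, Results and the element-to-ID mapping are
# assumptions modelled on the post, not taken from the real database.
ANALYSIS_IDS = {"Carbon": 1, "Nitrogen": 2, "Oxygen": 3, "Hydrogen": 4}

conn = sqlite3.connect(":memory:")  # SQLite stands in for Access here
cur = conn.cursor()

# Wide staging table: one row per sample, one column per element.
cur.execute(
    "CREATE TABLE ImportWide (SampleName TEXT, Carbon REAL, Nitrogen REAL, "
    "Oxygen REAL, Hydrogen REAL)"
)
cur.executemany(
    "INSERT INTO ImportWide VALUES (?, ?, ?, ?, ?)",
    [("Sample 123", 10, 20, 30, 40), ("Sample 234", 15, 54, 25, 60)],
)

# Narrow target table; RecordID is assigned automatically, like an AutoNumber.
cur.execute(
    "CREATE TABLE Results (RecordID INTEGER PRIMARY KEY AUTOINCREMENT, "
    "SampleID INTEGER, AnalysisID INTEGER, AnalysisValue REAL)"
)

# One SELECT per element column, stacked with UNION ALL (the "unpivot").
# Column names come from the fixed dict above, so building SQL text is safe here.
# Assumption: SampleID is the number after "Sample " in SampleName.
arms = [
    "SELECT CAST(REPLACE(SampleName, 'Sample ', '') AS INTEGER) AS SampleID, "
    f"{analysis_id} AS AnalysisID, {column} AS AnalysisValue FROM ImportWide"
    for column, analysis_id in ANALYSIS_IDS.items()
]

# Append the stacked rows, ordered so RecordIDs follow sample, then element.
cur.execute(
    "INSERT INTO Results (SampleID, AnalysisID, AnalysisValue) "
    "SELECT SampleID, AnalysisID, AnalysisValue FROM ("
    + " UNION ALL ".join(arms)
    + ") ORDER BY SampleID, AnalysisID"
)
conn.commit()

rows = cur.execute(
    "SELECT RecordID, SampleID, AnalysisID, AnalysisValue "
    "FROM Results ORDER BY RecordID"
).fetchall()
for row in rows:
    print(row)
```

In Access itself, a union query cannot be drawn in the query designer and has to be typed in SQL view, which may be why the builder seemed unable to do this. A saved union query along these lines could then serve as the source of an append query into the real table. Access's own string functions, or a join to Samples, would replace the CAST/REPLACE step.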

End result: with the example data above, I want to generate the following records to append:

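| RecordID | SampleID | AnalysisID | AnalysisValue |
|---|---|---|---|
| 1 | 123 | 1 | 10 |
| 2 | 123 | 2 | 20 |
| 3 | 123 | 3 | 30 |
| 4 | 123 | 4 | 40 |
| 5 | 234 | 1 | 15 |
| 6 | 234 | 2 | 54 |
| 7 | 234 | 3 | 25 |
| 8 | 234 | 4 | 60 |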
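If queries turn out to be awkward, the same reshaping can be done procedurally by looping over each wide row and emitting one record per element column. Below is a minimal sketch in Python, under several assumptions. The wide data is taken to arrive as CSV text, with WIDE_CSV as a hypothetical stand-in for the real file. AnalysisIDs are mapped to element columns as in the expected output above. SampleID is taken to be the number at the end of the sample name. The names unpivot and ANALYSIS_IDS are illustrative only.

```python
import csv
import io

# Hypothetical CSV export of the wide sample data from the post.
WIDE_CSV = """Sample Name,Carbon,Nitrogen,Oxygen,Hydrogen
Sample 123,10,20,30,40
Sample 234,15,54,25,60
"""

# Assumed AnalysisID for each element column, matching the post's expected output.
ANALYSIS_IDS = {"Carbon": 1, "Nitrogen": 2, "Oxygen": 3, "Hydrogen": 4}


def unpivot(csv_text):
    """Return one (RecordID, SampleID, AnalysisID, AnalysisValue) tuple per element value."""
    records = []
    record_id = 1  # counter standing in for the AutoNumber field
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Assumption: SampleID is the number at the end of "Sample 123".
        sample_id = int(row["Sample Name"].split()[-1])
        for element, analysis_id in ANALYSIS_IDS.items():
            records.append((record_id, sample_id, analysis_id, float(row[element])))
            record_id += 1
    return records


for record in unpivot(WIDE_CSV):
    print(record)
```

Inside Access, the equivalent would presumably be a VBA loop over a recordset of the imported rows. In that version, the AutoNumber field would assign RecordID instead of the manual counter used here.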

Thanks,

CB